Dajinn08

New Member

- Total Posts : 18

- Reward points : 0

- Joined: 2018/03/21 18:11:24

- Status: offline

- Ribbons : 0

For overclocking a 1080 Ti SC2, I'm trying to understand what the behavior is supposed to be.

Setting aside the technical explanations of turbo boost, I want to discuss what I'm observing and how it should perform, because after watching the core clock during games/benchmarks I am still confused.

Does the core clock correlate with the load on the GPU? e.g. 100% GPU load means the GPU tries everything it can to squeeze out as much performance from the parameters set? (power / temp limit, core clock offset, voltage) Or is it load + temp or strictly temp?

The settings for my SC2 are 120% power, 90c temp target, voltage maxed, and core offset of +50. Temps chill at around 70c. Whenever I make changes to these and click apply, I see the GPU go to its theoretical "max" for a split second, which is around 2025 MHz. However, in a game like FFXV, it starts off reaching 2000 MHz and then the card seems to settle back down to around 1936, 1942, or 1960 MHz, usually never really going above those again. Maybe for 1 second out of a stretch of 20-30 minutes it'll boost up to 1987 MHz, but it doesn't stick there. All the while, the card is still sitting at 70c.

The core clock of 1936/1942/1960 is basically what I see if I don't even overclock the card and just set it to 120% power and a 90c temp target. I have the priority set to temp; however, even though the temps are 20 degrees below the target, I cannot seem to get any overclock on the core to actually stick.

So that leaves me wondering if current core clock is associated with GPU load and if the clock would be higher if the game/application was more demanding?

If, say, the temps of my card were actually 60 or 65c, would I see over 2000 MHz more frequently with the +50 core offset? If this is the case, then what is the point of the temp target and setting it as the priority? You would think that the card would push itself to its max if it's under this target; otherwise it doesn't make much sense to me.

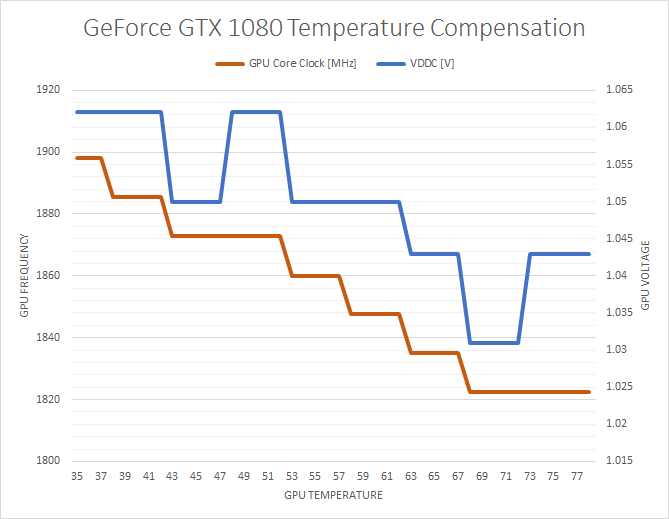

Or is it that GPU boost has reductions in maximum clock speeds based on temperature tiers?

|

squall-leonhart

CLASSIFIED Member

- Total Posts : 2904

- Reward points : 0

- Joined: 2009/07/27 19:57:03

- Location: Australia

- Status: offline

- Ribbons : 24

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/13 05:31:21

CPU:Intel Xeon x5690 @ 4.2Ghz, Mainboard:Asus Rampage III Extreme, Memory:48GB Corsair Vengeance LP 1600

Video:EVGA Geforce GTX 1080 Founders Edition, NVidia Geforce GTX 1060 Founders Edition

Monitor:BenQ G2400WD, BenQ BL2211, Sound:Creative XFI Titanium Fatal1ty Pro

SDD:Crucial MX300 275, Crucial MX300 525, Crucial MX300 1000

HDD:500GB Spinpoint F3, 1TB WD Black, 2TB WD Red, 1TB WD Black

Case:NZXT Phantom 820, PSU:Seasonic X-850, OS:Windows 7 SP1

Cooler: ThermalRight Silver Arrow IB-E Extreme

|

Dajinn08

New Member

- Total Posts : 18

- Reward points : 0

- Joined: 2018/03/21 18:11:24

- Status: offline

- Ribbons : 0

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/13 05:42:07

Already read that, it doesn't really answer how temps actually play into things. Like I was asking, what is the point of the temp and power limits if boost is still keeping itself in check based on creeping up temperatures say from 60...to 65...to 70? Setting a high limit and also making it the priority to me means that the card performs the same UNTIL it reaches that limit.

post edited by Dajinn08 - 2018/04/13 05:53:14

|

DeathAngel74

FTW Member

- Total Posts : 1263

- Reward points : 0

- Joined: 2015/03/04 22:16:53

- Location: With the evil monkey in your closet!!

- Status: offline

- Ribbons : 10

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/13 06:32:28

The cooler the card is running, the higher it will boost without throttling. As an example, my cards will boost to 2075MHz until around 50-55c, then drop to 2050MHz. By that time, the fans are at 100% and they don't drop anymore after that though.

Hope that helps.

Carnage specs: Motherboard: ASUS ROG STRIX X299-E GAMING | Processor: Intel® Core™ i7-7820x | Memory Channels#1 and #3: Corsair Vengeance RGB 4x8GB DDR4 DRAM 3200MHz | Memory Channels#2 and #4: Corsair Vengeance LPX Black 4x8GB DDR4 DRAM 3200 MHz | GPU: eVGA 1080 TI FTW3 Hybrid | PhysX: eVGA 1070 SC2 | SSD#1: Samsung 960 EVO 256GB m.2 nVME(Windows/boot) | SSD#2&3: OCZ TRION 150 480GB SATAx2(RAID0-Games) | SSD#4: ADATA Premier SP550 480GB SATA(Storage) | CPU Cooler: Thermaltake Water 3.0 RGB 360mm AIO LCS | Case: Thermaltake X31 RGB | Power Supply: Thermaltake Toughpower DPS G RGB 1000W Titanium | Keyboard: Razer Ornato Chroma | Mouse: Razer DeathAdder Elite Chroma | Mousepad: Razer Firefly Chroma | Operating System#1: Windows 7 SP1 Ultimate X64 | Operating System#2: Linux Mint 18.2 Sonya (3DS Homebrew/Build Environment)

|

ty_ger07

Insert Custom Title Here

- Total Posts : 21174

- Reward points : 0

- Joined: 2008/04/10 23:48:15

- Location: traveler

- Status: offline

- Ribbons : 270

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/13 06:46:01

Dajinn08

Does the core clock correlate with the load on the GPU? e.g. 100% GPU load means the GPU tries everything it can to squeeze out as much performance from the parameters set?

Yup. You will sometimes see perfcap reason: util. This means that the card isn't boosting higher because the utilization is low enough that boosting higher would have no effect other than to increase performance and thus further reduce utilization.

Dajinn08

So that leaves me wondering if current core clock is associated with GPU load and if the clock would be higher if the game/application was more demanding?

Typically, the card will already be at 100% load ("99%" is essentially 100%). Thus, there is no higher load possible. If you try to demand more from it, the framerate will just drop, but it won't boost higher since it is already at 100% load and already boosted as much as it could for that amount of load. It's only when boost is limited by utilization that more load will result in more boost.

ASRock Z77 • Intel Core i7 3770K • EVGA GTX 1080 • Samsung 850 Pro • Seasonic PRIME 600W Titanium

My EVGA Score: 1546 • Zero Associates Points • I don't shill

|

HeavyHemi

Insert Custom Title Here

- Total Posts : 15665

- Reward points : 0

- Joined: 2008/11/28 20:31:42

- Location: Western Washington

- Status: offline

- Ribbons : 135

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/13 10:37:47

And... https://www.anandtech.com...ders-edition-review/15

EVGA X99 FTWK / i7 6850K @ 4.5ghz / RTX 3080Ti FTW Ultra / 32GB Corsair LPX 3600mhz / Samsung 850Pro 256GB / Be Quiet BN516 Straight Power 12-1000w 80 Plus Platinum / Window 10 Pro

|

Sajin

EVGA Forum Moderator

- Total Posts : 49170

- Reward points : 0

- Joined: 2010/06/07 21:11:51

- Location: Texas, USA.

- Status: online

- Ribbons : 199

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/13 12:19:46

Dajinn08

Or is it that GPU boost has reductions in maximum clock speeds based on temperature tiers?

Yep. Temp, power, load & set voltage all affect how the card will boost.

|

Dajinn08

New Member

- Total Posts : 18

- Reward points : 0

- Joined: 2018/03/21 18:11:24

- Status: offline

- Ribbons : 0

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/14 09:48:33

I kind of wish the big OEMs would stop doing these weird boost things. At least with Intel, if you overclock, the whole turbo boost is essentially taken out of the equation. But with this it's just kind of awkward. This sounds weird but I really miss the days of just setting a slider and having a card work at the frequency set.

I guess the big advent of boost was for marketing and for the consumer's benefit, the first being that you could technically undersell the potential of a card and hype up a market base when it outperformed stock expectations, the latter being that consumers who don't overclock can still reap some benefit of automatically enhanced frequencies. I guess they're both one and the same.

I took off my front and side panels to do thermal testing. Even keeping the card at 60-62c, it just doesn't ever seem like an overclocked / offset frequency "sticks". The card will boost up to the max frequency when I tab back into a benchmark for like 2-3 seconds and then it just kind of goes back down into the 1930-1960 range, sometimes hitting 1987 for a split second, which is what the card does stock anyway. Again, why bother even having these overclocking offsets?

Temperature-tiered boost clocks don't really make sense to me. It's like the architecture imposes this weird self-limitation, but if the current required to power a particular clock rate doesn't result in increased temps (obviously this is going to be binning dependent to some extent), why not just stick at the boosted frequency? This is why the boost tech is lost on me and why I wish you could just set a slider and monitor temps, and if they're okay, the card should just stay at the set clock.

To be fair to EVGA and the Pascal architecture, I've had the same complaint about GPU Boost since the 600 series; however, I don't think I've ever seen a more finicky implementation than the 1080 Ti. Maybe they could start selling the high end cards with BIOS sliders that let you turn off boost and just go vanilla.

post edited by Dajinn08 - 2018/04/14 09:50:39

|

ty_ger07

Insert Custom Title Here

- Total Posts : 21174

- Reward points : 0

- Joined: 2008/04/10 23:48:15

- Location: traveler

- Status: offline

- Ribbons : 270

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/14 10:05:39

When the frequency drops, what is the perfcap reason? Once you know what the perfcap reason is, you know what to adjust.

|

Dajinn08

New Member

- Total Posts : 18

- Reward points : 0

- Joined: 2018/03/21 18:11:24

- Status: offline

- Ribbons : 0

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/14 10:55:10

ty_ger07

When the frequency drops, what is the perfcap reason? Once you know what the perfcap reason is, you know what to adjust.

I see VRel and Pwr usually, mostly VRel. Voltage slider set to max and power cap set to 120%, temp hovering between 60-63c.
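
As a rough aid for reading those sensor values, here is a minimal Python sketch that maps the GPU-Z PerfCap abbreviations to their meaning. The abbreviations are the ones GPU-Z displays; the "what to try" hints and the `explain` helper are assumptions added for illustration, not vendor guidance.

```python
# GPU-Z PerfCap abbreviations and what each one means.
# The hints in the second field are illustrative assumptions, not vendor guidance.
PERFCAP = {
    "Pwr": ("power limit reached", "raise the power target or run a lower voltage"),
    "Thrm": ("temperature limit reached", "improve cooling or raise the temp target"),
    "VRel": ("voltage reliability limit", "cooling or a tuned V/F curve is what's left"),
    "VOp": ("maximum operating voltage", "no headroom left without hardware mods"),
    "Util": ("GPU not fully loaded", "nothing to fix; the load is the limit"),
}


def explain(reasons):
    """Return one readable line per PerfCap abbreviation seen in the sensor log."""
    lines = []
    for reason in reasons:
        meaning, hint = PERFCAP.get(reason, ("unknown reason", "check the GPU-Z tooltip"))
        lines.append(f"{reason}: {meaning} -> {hint}")
    return lines


# The two reasons reported in the post above.
for line in explain(["VRel", "Pwr"]):
    print(line)
```

With VRel showing most of the time, raising the power or temp targets further is unlikely to change much, which fits what was observed with both sliders already maxed.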

|

HeavyHemi

Insert Custom Title Here

- Total Posts : 15665

- Reward points : 0

- Joined: 2008/11/28 20:31:42

- Location: Western Washington

- Status: offline

- Ribbons : 135

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/14 11:27:20

Dajinn08

I kind of wish the big OEMs would stop doing these weird boost things, at least with Intel if you overclock the whole turbo boost is essentially taken out of the equation. But with this it's just kind of awkward. This sounds weird but I really miss the days of just setting a slider and having a card work at the frequency set.

I guess the big advent of boost was for marketing and for the consumer's benefit, the first being that you could technically undersell the potential of a card and hype up a market base when it outperformed stock expectations, the latter being that for consumers who don't overclock, they can still reap some benefit of automatically enhanced frequencies. I guess they're both one in the same.

I took off my front and side panels to do thermal testing, even keeping the card at 60-62c, it just doesn't ever seem like an overclocked / offset frequency "sticks", the card will boost up to the max frequency when I tab back into a benchmark for like 2 -3 seconds and then it just kind of goes back down into the 1930-1960 range, sometimes hitting 1987 for a split second, which is what the card does stock anyway. Again, why bother even having these overclocking offsets.

Temperature tiering based boost clocks doesn't really make sense to me, it's like the architecture imposes this weird self limitation but if the current required to power a particular clock rate doesn't result increased temps (obviously this is going to be binning dependent to some extents), why not just stick at the boosted frequency? This is why the boost tech is lost on me and why I wish you could just set a slider and monitor temps and if they're okay, the card should just stay at the set clock.

To be fair to EVGA and Pascal architecture, I've had the same complaint about GPU Boost since the 600 series, however, I don't think I've ever seen a more finnicky implementation since the 1080 Ti. Maybe they could start selling the high end cards with BIOS sliders that let you turn off boost and just go vanilla.

With Intel you still have C-states and 'boost clocks' unless you also disable those manually. Have you considered KBoost? The disadvantage is locking your clocks at a higher state all the time. Also, using the voltage curve method you can find a clock that is relatively stable within a bin or two of your target frequency. You just have to experiment... just like with CPU overclocking. The main difference is being voltage limited to 1.093v. You can't add more voltage over that without mods. I never hit that. I'm at 2037 @ 1.0v using the voltage curve.

|

Valtrius Malleus

iCX Member

- Total Posts : 290

- Reward points : 0

- Joined: 2017/03/16 19:46:33

- Status: offline

- Ribbons : 2

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/14 12:00:05

Dajinn08

I kind of wish the big OEMs would stop doing these weird boost things, at least with Intel if you overclock the whole turbo boost is essentially taken out of the equation. But with this it's just kind of awkward. This sounds weird but I really miss the days of just setting a slider and having a card work at the frequency set.

I couldn't agree more. With Intel, you get the clocks you want until it hits max Tj and then it throttles. If throttling doesn't work for some reason, like you forgot to put on a heatsink, then it would shut down the PC. Nvidia's Turbo boost thing is pathetic. Nothing more than a marketing gimmick. If AMD doesn't have it next year when I upgrade my cards then I'll be jumping on team red.

The voltage slider sort of shifts the temp/voltage curve higher so you will get higher volts at the same temperature by increasing the slider.

HeavyHemi

The main difference is being voltage limited to 1.093v. You can't add more voltage over that without mods. I never hit that. I'm at 2037 @ 1.0v using the voltage curve.

That's damn impressive. I can only get 1987MHz at 1.0v but I can get +175/+750 (on a FE) even at 1.093v so yours would be capable of hitting higher, at least in theory.

The best way to overclock Pascal is to literally overclock every single bin on the voltage curve. Find out the max frequency for each voltage so it doesn't matter where Boost 3.0 draws the temp-line, the card is always performing at its maximum. The benefits are negligible in-game though, and it takes a while to find a stable overclock for each bin. On top of that, the curve can move on its own... thanks to Boost 3.0! So the offset you had set which would get you a stable overclock might be lowered, or it might go higher and cause a crash, which is why I hate it so much.
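
The per-bin approach can be pictured as a small lookup table. This is only a sketch in Python: the 1.0 V / 1987 MHz point comes from the post above, while the other points, the `stable_curve` name and the `clock_for_voltage` helper are hypothetical values and names chosen for illustration.

```python
# Hypothetical results of per-bin stability testing:
# voltage point (V) -> highest clock (MHz) found stable at that voltage.
# Only the 1.000 V entry is taken from the thread; the rest are made up.
stable_curve = {0.950: 1962, 1.000: 1987, 1.043: 2025, 1.093: 2050}


def clock_for_voltage(curve, voltage_cap):
    """Return the clock of the highest tested voltage point at or below the cap."""
    usable = [v for v in curve if v <= voltage_cap]
    if not usable:
        raise ValueError("no tested point at or below this voltage")
    return curve[max(usable)]


# Whatever voltage the boost logic settles on, the card runs the best tested clock for it.
for cap in (0.95, 1.0, 1.05, 1.093):
    print(cap, clock_for_voltage(stable_curve, cap))
```

The point of tuning every bin is visible here: when the boost logic steps the voltage down, the clock falls back to the next tested point instead of an untested one.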

|

DeathAngel74

FTW Member

- Total Posts : 1263

- Reward points : 0

- Joined: 2015/03/04 22:16:53

- Location: With the evil monkey in your closet!!

- Status: offline

- Ribbons : 10

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/15 08:21:33

I'm at 2037 @ 1.037v-1.043v/+75 core/+500 memory on both 1070SC2 and 1080Ti.

|

HeavyHemi

Insert Custom Title Here

- Total Posts : 15665

- Reward points : 0

- Joined: 2008/11/28 20:31:42

- Location: Western Washington

- Status: offline

- Ribbons : 135

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/15 09:43:56

Valtrius Malleus

Dajinn08

I kind of wish the big OEMs would stop doing these weird boost things, at least with Intel if you overclock the whole turbo boost is essentially taken out of the equation. But with this it's just kind of awkward. This sounds weird but I really miss the days of just setting a slider and having a card work at the frequency set.

I couldn't agree more. With Intel, you get the clocks you want until it hits max Tj and then it throttles. If throttling doesn't work for some reason, like you forgot to put on a heatsink, then it would shut down the PC. nVidias Turbo boost thing is pathetic. Nothing more than a marketing gimmick. If AMD doesn't have it next year when I upgrade my cards then I'll be jumping on team red.

The voltage slider sort of shifts the temp/voltage curve higher so you will get higher volts at the same temperature by increasing the slider.

HeavyHemi

The main difference is being voltage limited to 1.093v. You can't add more voltage over that without mods. I never hit that. I'm at 2037 @ 1.0v using the voltage curve.

That's damn impressive. I can only get 1987MHz at 1.0v but I can get +175/+750 (on a FE) even at 1.093v so yours would be capable of hitting higher, at least in theory.

The best way to overclock pascal is to literally overclock every single bin on the voltage curve. Find out the max frequency for each voltage so it doesn't matter where Boost 3.0 draw the temp-line, the card is always performing at it's maximum. The benfits are neglible ingame though and it takes a while to find a stable overlock for each bin. On top of that, the curve can move on it own... thanks to Boost 3.0! So the offset you had set which would get you a stable overclock might be lowered, or it might go higher and cause a crash which is why I hate it so much.

I've never had the curve move on its own and I don't use the percentage slider. I simply find (the same as with CPU overclocking) the stable clocks and voltages I want to use. On this GPU, I've had a couple of random crashes at 2050 MHz 1.0v while gaming. Metro Redux is good for that. So I settled on 2037 MHz at 1.0v for absolute stability. Of course I can hit higher clocks. I am also aware, through extensive testing, of what is 100% stable on my system at all times. I personally cannot see the utility of changing or adjusting clocks per game. I could see doing that for benching, where under some conditions I can run as high as 2114 MHz. I don't know why you'd switch to AMD. The performance is far worse. That makes no sense when, if you know what you're doing, you can mimic most of K-Boost without the drawbacks. I mean from my scores on benches... it's pretty clear I know what I'm doing.

post edited by HeavyHemi - 2018/04/15 09:46:54

|

Dajinn08

New Member

- Total Posts : 18

- Reward points : 0

- Joined: 2018/03/21 18:11:24

- Status: offline

- Ribbons : 0

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/15 11:49:36

How do you change the voltage?

|

Sajin

EVGA Forum Moderator

- Total Posts : 49170

- Reward points : 0

- Joined: 2010/06/07 21:11:51

- Location: Texas, USA.

- Status: online

- Ribbons : 199

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/15 12:47:45

Dajinn08

How do you change the voltage?

Afterburner voltage/frequency curve editor (CTRL+F), then press L after selecting a V/F point inside the editor.

post edited by Sajin - 2018/04/15 12:53:41

|

Ranmacanada

SSC Member

- Total Posts : 992

- Reward points : 0

- Joined: 2011/09/22 10:44:47

- Status: offline

- Ribbons : 3

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/15 20:18:30

With my 1080ti SC hybrid, it starts throttling the clock at 40C, from 2075 to 2063. The problem you are having is because of heat, nothing more. As the card gets hotter it will throttle down more and more. It's the way nvidia designed Pascal. Even my 1080FTW will throttle down from 2012 to 1985 as soon as it hits 60C. The only way you'll be able to get your settings to stick is to cool it down more.
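
The stepping described here can be sketched as a simple tier model in Python. Everything in it is an assumption for illustration: the temperature thresholds are hypothetical, and the 12 MHz bin size is only picked to match the 2075 to 2063 step reported above. The actual tables Nvidia uses are not documented in this thread.

```python
def boosted_clock(max_clock_mhz, temp_c, thresholds_c=(40, 50, 60, 70), bin_mhz=12):
    """Drop one clock bin for every temperature threshold the GPU has reached.

    thresholds_c and bin_mhz are illustrative assumptions, not Nvidia's real tables.
    """
    bins_lost = sum(1 for t in thresholds_c if temp_c >= t)
    return max_clock_mhz - bins_lost * bin_mhz


# A card that tops out at 2075 MHz when cold, sampled at a few temperatures.
for temp in (35, 45, 65, 75):
    print(f"{temp} C -> {boosted_clock(2075, temp)} MHz")
```

Under a model like this, sitting 20 degrees below the temp target does not help: the target only marks where hard throttling begins, while the small per-tier steps happen well below it.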

ASUS TUF GAMING X570-PLUS (WI-FI) AMD Ryzen 2700 Fold for the CURE! EVGA 1080 FTW EVGA 1080Ti Hybrid

|

Dajinn08

New Member

- Total Posts : 18

- Reward points : 0

- Joined: 2018/03/21 18:11:24

- Status: offline

- Ribbons : 0

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 04:30:31

So pointless. Boost is garbage. Just let me stay at the frequency I want if the temp is fine, jeez.

post edited by Sajin - 2018/04/16 14:34:46

|

Ranmacanada

SSC Member

- Total Posts : 992

- Reward points : 0

- Joined: 2011/09/22 10:44:47

- Status: offline

- Ribbons : 3

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 08:06:35

Dajinn08

So pointless. Boost is garbage. Just let me stay at the frequency I want if the temp is fine, jeez.

Boost is not garbage. It's the people who insist on overclocking their already overclocked part by that extra 30 MHz, which will make NO difference at all, who complain about boost. All EVGA cards boost more than the specs of GPU Boost 3.0, yet certain people want to try to get that extra little bit because they think more is better. Well, it's not. Oftentimes your efforts to overclock your card will introduce instability, and the card will actually perform worse. Look at your stock clocks and stock boost. The card you have is guaranteed to boost to 1670. The fact that it's in the 1900s means your card is boosting far more than stock, and that should be good enough for you. What you're doing is complaining that your card, which is self-overclocking almost 300 MHz more than stock, can't go another 20%. It's like all those Ryzen owners who complain they can't hit 4gig on their chips when they self-boost to 3.8.
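
For scale, the numbers in this post can be worked through in a few lines of Python. The 1670 MHz rated boost and the 30 MHz figure come from the post above and 1936 MHz is the low end of the range reported earlier in the thread. The assumption that frame rate scales 1:1 with core clock is a best case added for illustration, so the result is an upper bound.

```python
rated_boost = 1670  # MHz, rated boost quoted in the post above
observed = 1936     # MHz, low end of the clocks reported earlier in the thread
extra = 30          # MHz, the additional overclock being argued about

headroom_mhz = observed - rated_boost
headroom_pct = 100 * headroom_mhz / rated_boost
extra_pct = 100 * extra / observed


def fps_gain_upper_bound(fps, pct):
    """Best case: frame rate scales 1:1 with core clock (an assumption)."""
    return fps * pct / 100


print(f"boost over rated: {headroom_mhz} MHz ({headroom_pct:.1f}%)")
print(f"extra 30 MHz: {extra_pct:.2f}% more clock")
print(f"best-case gain at 60 fps: {fps_gain_upper_bound(60, extra_pct):.2f} fps")
```

Under that best-case assumption, 30 MHz is worth under one frame per second at 60 fps. Real games rarely scale perfectly with core clock, so the practical gain would be smaller.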

post edited by Sajin - 2018/04/16 14:35:04

|

HeavyHemi

Insert Custom Title Here

- Total Posts : 15665

- Reward points : 0

- Joined: 2008/11/28 20:31:42

- Location: Western Washington

- Status: offline

- Ribbons : 135

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 08:16:33

Dajinn08

So pointless. Boost is garbage. Just let me stay at the frequency I want if the temp is fine, jeez.

Please clean your post up. You're entitled to your opinion, you're not entitled to be nasty or use acronyms to hide expletives on a family friendly forum. We've explained to you how to accomplish what you want. But apparently if it takes more than just pressing a button, you don't want to hear it. You can overclock the GPU to where it will hold the frequency you want. It just takes time and a bit of effort, just as with overclocking your CPU.

post edited by Sajin - 2018/04/16 14:35:27

|

Dajinn08

New Member

- Total Posts : 18

- Reward points : 0

- Joined: 2018/03/21 18:11:24

- Status: offline

- Ribbons : 0

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 08:22:23

I find an extra 2-5 fps to be a significant advantage, and worth an extra 30 MHz. Those numbers matter in relative terms: 2 to 5 FPS could be what keeps you from dipping under 30, or from dipping under 60 fps if you game with vsync on an older 60 Hz monitor. Put another way, it's a 2 to 5 FPS buffer keeping you further away from that dreaded dip: the card has the horsepower "on hand" to handle changes in 3D scenery that would otherwise place an uncommonly high demand on the GPU and cause a more substantial frame drop than normal.

HeavyHemi

Dajinn08

So pointless. Boost is garbage. Just let me stay at the frequency I want if the temp is fine, jeez.

Please clean your post up. You're entitled to your opinion, you're not entitled to be nasty or use acronyms to hide expletives on a family friendly forum. We've explained to you how to accomplish what you want. But apparently if it takes more than just pressing a button, you don't want to hear it. You can overclock the GPU to where it will hold the frequency you want. It just takes time and a bit of effort, just as with overclocking your CPU.

I saw the post about modifying the voltage curve, I just haven't had time to do it yet. I didn't write it off as you're suggesting, I just wasn't aware of it til the other day and will report back at some point with results.

post edited by Sajin - 2018/04/16 14:35:43

|

ty_ger07

Insert Custom Title Here

- Total Posts : 21174

- Reward points : 0

- Joined: 2008/04/10 23:48:15

- Location: traveler

- Status: offline

- Ribbons : 270

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 09:36:55

GPU Boost 3.0 is not garbage. Boost is great. As said, it's self-overclocking. Even better, it's adjustable overclocking which changes as the card's overclocking limits change.

If all you had was a slider -- like back in the good old days -- you could overclock it to 1950 MHz. But then let's say that you discover that your overclock works nicely until the card reaches 70°c, and then it crashes. So, you lower your overclock to 1925 MHz. Now it works without issue. See the problem? Your total overclock is now actually lower than it could have been with GPU Boost 3.0. With GPU Boost 3.0, it overclocks to 1950 MHz when it can, so you get extra FPS when you can, but lowers to 1925 MHz when it has to in order to keep the card from crashing. Better. Certainly.
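
The same comparison, written out as a small Python sketch. The 1950/1925 MHz limits and the 70°c crossover are the example numbers from this post; the temperature samples are hypothetical.

```python
def max_stable_mhz(temp_c):
    """Example stability limit from the post: 1950 MHz below 70 C, 1925 MHz from 70 C up."""
    return 1950 if temp_c < 70 else 1925


# Hypothetical core temperatures sampled over a gaming session.
temps = [55, 60, 66, 70, 72, 68, 64]

# A fixed slider has to survive the hottest sample, so it is pinned to the lowest limit.
fixed_clock = min(max_stable_mhz(t) for t in temps)

# A temperature-aware boost can follow the limit sample by sample.
adaptive = [max_stable_mhz(t) for t in temps]
adaptive_avg = sum(adaptive) / len(adaptive)

print("fixed slider:", fixed_clock, "MHz")
print("adaptive average:", round(adaptive_avg, 1), "MHz")
```

The fixed clock can never exceed the worst-case limit, while the adaptive average sits above it whenever the card spends any time below 70°c.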

|

Valtrius Malleus

iCX Member

- Total Posts : 290

- Reward points : 0

- Joined: 2017/03/16 19:46:33

- Status: offline

- Ribbons : 2

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 11:28:45

ty_ger07

GPU Boost 3.0 is not garbage. Boost is great. As said, it's self-overclocking. Even better, it's adjustable overclocking which changes as the the card's overclocking limits change.

If all you had was a slider -- like back in the good old days -- you could overclock it to 1950 MHz. But then lets say that you discover that your overclock works nicely until the card reaches 70°c, and then it crashes. So, you lower your overclock to 1925 MHz. Now it works without issue. See the problem? Your total overclock is now actually lower than it could have been with GPU Boost 3.0. With GPU Boost 3.0, it overclocks to 1950 MHz when it can, so you get extra FPS when you can, but lowers to 1925 MHz when it has to in order to keep the card from crashing. Better. Certainly.

The issue is that Boost 3.0 overcompensates so you're not getting a better overclock. It's just something else that's been dumbed down to appeal to the masses.

|

ty_ger07

Insert Custom Title Here

- Total Posts : 21174

- Reward points : 0

- Joined: 2008/04/10 23:48:15

- Location: traveler

- Status: offline

- Ribbons : 270

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 12:48:51

Valtrius Malleus

ty_ger07

GPU Boost 3.0 is not garbage. Boost is great. As said, it's self-overclocking. Even better, it's adjustable overclocking which changes as the the card's overclocking limits change.

If all you had was a slider -- like back in the good old days -- you could overclock it to 1950 MHz. But then lets say that you discover that your overclock works nicely until the card reaches 70°c, and then it crashes. So, you lower your overclock to 1925 MHz. Now it works without issue. See the problem? Your total overclock is now actually lower than it could have been with GPU Boost 3.0. With GPU Boost 3.0, it overclocks to 1950 MHz when it can, so you get extra FPS when you can, but lowers to 1925 MHz when it has to in order to keep the card from crashing. Better. Certainly.

The issue is that Boost 3.0 overcompensates so you're not getting a better overlock. It's just something else that's been dumbed down to appeal to the masses.

Not in my experience. The initial boost may be a little low, sure. The initial power safety margin may leave some wanting a little more aggressiveness, sure. But once you increase the power % to where you want it and increase the boost offset to the max stable, the way the boost drops as temperature increases seems just about right to me. Try it for yourself. Boost to +100 (or whatever your max stable is), see that the core frequency is 2000 at 50°c (or whatever it actually is), see that the core frequency drops to 1970 at 70°c (or whatever it actually is), and then, without allowing core temperature to drop, boost to +130 (or whatever) so that core frequency is back to 2000 MHz (or whatever) at 70°c (or whatever). Crash. See? These are just example numbers, but my point should be clear, and you can substitute the max stable values of your own video card to prove it.

post edited by ty_ger07 - 2018/04/16 12:53:25

ASRock Z77 • Intel Core i7 3770K • EVGA GTX 1080 • Samsung 850 Pro • Seasonic PRIME 600W Titanium

My EVGA Score: 1546 • Zero Associates Points • I don't shill

|

HeavyHemi

Insert Custom Title Here

- Total Posts : 15665

- Reward points : 0

- Joined: 2008/11/28 20:31:42

- Location: Western Washington

- Status: offline

- Ribbons : 135

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 14:21:38

Valtrius Malleus

ty_ger07

GPU Boost 3.0 is not garbage. Boost is great. As said, it's self-overclocking. Even better, it's adjustable overclocking which changes as the card's overclocking limits change.

If all you had was a slider -- like back in the good old days -- you could overclock it to 1950 MHz. But then let's say that you discover that your overclock works nicely until the card reaches 70°C, and then it crashes. So, you lower your overclock to 1925 MHz. Now it works without issue. See the problem? Your total overclock is now actually lower than it could have been with GPU Boost 3.0. With GPU Boost 3.0, it overclocks to 1950 MHz when it can, so you get extra FPS when you can, but lowers to 1925 MHz when it has to in order to keep the card from crashing. Better. Certainly.

The issue is that Boost 3.0 overcompensates so you're not getting a better overclock. It's just something else that's been dumbed down to appeal to the masses.

You must also realize that automatic clock control is of course going to have some margin. Otherwise RMA rates go through the roof. This is exactly the same concept that occurs with CPUs. Pretty much any recent CPU will overclock by ~20%. But you have to do that yourself by trial and error. By MANUALLY adjusting for your individual part you can typically gain some performance. The same goes for a GPU. Also! These GPUs are designed to operate within a certain power draw. Increasing the clocks increases power draw quite substantially. That's another purpose of GPU Boost 3.0. Even at my moderate overclock, I'm pulling ~300 watts peak. And lastly, of course it is 'dumbed down for the masses'. Who do you think buys the vast majority of their products? The masses that just stick one in their machine, and that's it. Sometimes, I think *enthusiasts have unrealistic expectations of what should be provided for a mass-produced product.

Custom costs....did you consider the KingPin model?
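As a rough aside on why clocks and power draw are tied together: the usual textbook approximation for dynamic power in CMOS logic is shown below. This is a general rule of thumb, not an NVIDIA-specific formula or anything measured in this thread.

```latex
P_{\text{dyn}} \approx \alpha \, C \, V^{2} f
```

Here \alpha is the activity factor, C the switched capacitance, V the core voltage, and f the clock frequency. Because a higher clock usually needs a higher voltage to stay stable, power tends to rise faster than linearly with frequency, which is one reason a power limit ends up capping the clocks.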

EVGA X99 FTWK / i7 6850K @ 4.5ghz / RTX 3080Ti FTW Ultra / 32GB Corsair LPX 3600mhz / Samsung 850Pro 256GB / Be Quiet BN516 Straight Power 12-1000w 80 Plus Platinum / Windows 10 Pro

|

Sajin

EVGA Forum Moderator

- Total Posts : 49170

- Reward points : 0

- Joined: 2010/06/07 21:11:51

- Location: Texas, USA.

- Status: online

- Ribbons : 199

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 14:39:16

Dajinn08

So pointless. Boost is garbage. Just let me stay at the frequency I want if the temp is fine, jeez.

1080 ti kingpin can do that for ya.

|

EVGA_JacobF

EVGA Alumni

- Total Posts : 16946

- Reward points : 0

- Joined: 2006/01/17 12:10:20

- Location: Brea, CA

- Status: offline

- Ribbons : 26

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 15:21:11

GPU Boost is a great feature to get the max out of any GPU based on the temperature/application, etc. If it were not for GPU Boost, ALL cards would run at Base Clock only.

|

Dajinn08

New Member

- Total Posts : 18

- Reward points : 0

- Joined: 2018/03/21 18:11:24

- Status: offline

- Ribbons : 0

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 20:19:05

HeavyHemi

Valtrius Malleus

ty_ger07

GPU Boost 3.0 is not garbage. Boost is great. As said, it's self-overclocking. Even better, it's adjustable overclocking which changes as the card's overclocking limits change.

If all you had was a slider -- like back in the good old days -- you could overclock it to 1950 MHz. But then let's say that you discover that your overclock works nicely until the card reaches 70°C, and then it crashes. So, you lower your overclock to 1925 MHz. Now it works without issue. See the problem? Your total overclock is now actually lower than it could have been with GPU Boost 3.0. With GPU Boost 3.0, it overclocks to 1950 MHz when it can, so you get extra FPS when you can, but lowers to 1925 MHz when it has to in order to keep the card from crashing. Better. Certainly.

The issue is that Boost 3.0 overcompensates so you're not getting a better overclock. It's just something else that's been dumbed down to appeal to the masses.

You must also realize that automatic clock control is of course going to have some margin. Otherwise RMA rates go through the roof. This is exactly the same concept that occurs with CPUs. Pretty much any recent CPU will overclock by ~20%. But you have to do that yourself by trial and error. By MANUALLY adjusting for your individual part you can typically gain some performance. The same goes for a GPU. Also! These GPUs are designed to operate within a certain power draw. Increasing the clocks increases power draw quite substantially. That's another purpose of GPU Boost 3.0. Even at my moderate overclock, I'm pulling ~300 watts peak. And lastly, of course it is 'dumbed down for the masses'. Who do you think buys the vast majority of their products? The masses that just stick one in their machine, and that's it. Sometimes, I think *enthusiasts have unrealistic expectations of what should be provided for a mass-produced product.

Custom costs....did you consider the KingPin model?

I'm just not getting the comparison to CPUs here... yes, Intel and AMD have their own dynamic clocks (at stock settings) but that's where the similarities end. They don't dynamically throttle clocks down based on arbitrary temperature tiers when you start overclocking, unless you count Tj max et al.

Enthusiasts in that space typically have a good general idea of what safe thermal and voltage limits are. As long as you stay within these and the cooler can adequately dissipate the thermal load, it's generally agreed that longevity is not really affected and the overclock is suitable for 24/7 load. By that I don't literally mean 100% CPU cycles 24 hours a day, rather that it's a safe configuration to run as a daily driver without damaging or adversely affecting the components. Though I suspect electricians and engineers may find differences here. To me, it doesn't matter whether I have to run X voltage or Y voltage to get a stable CPU overclock if the temps are pretty much the same under a given application load. That's why I find the temperature-based implementation of GPU Boost weird.

At any rate, I think one of the earlier posters hit the nail on the head: you pretty much get throttled core clocks from 57°C on, probably even earlier. None of my settings aside from memory really stick unless the temp is low.

post edited by Dajinn08 - 2018/04/16 20:21:51

|

HeavyHemi

Insert Custom Title Here

- Total Posts : 15665

- Reward points : 0

- Joined: 2008/11/28 20:31:42

- Location: Western Washington

- Status: offline

- Ribbons : 135

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/16 23:24:18

Dajinn08

HeavyHemi

Valtrius Malleus

ty_ger07

GPU Boost 3.0 is not garbage. Boost is great. As said, it's self-overclocking. Even better, it's adjustable overclocking which changes as the card's overclocking limits change.

If all you had was a slider -- like back in the good old days -- you could overclock it to 1950 MHz. But then let's say that you discover that your overclock works nicely until the card reaches 70°C, and then it crashes. So, you lower your overclock to 1925 MHz. Now it works without issue. See the problem? Your total overclock is now actually lower than it could have been with GPU Boost 3.0. With GPU Boost 3.0, it overclocks to 1950 MHz when it can, so you get extra FPS when you can, but lowers to 1925 MHz when it has to in order to keep the card from crashing. Better. Certainly.

The issue is that Boost 3.0 overcompensates so you're not getting a better overclock. It's just something else that's been dumbed down to appeal to the masses.

You must also realize that automatic clock control is of course going to have some margin. Otherwise RMA rates go through the roof. This is exactly the same concept that occurs with CPUs. Pretty much any recent CPU will overclock by ~20%. But you have to do that yourself by trial and error. By MANUALLY adjusting for your individual part you can typically gain some performance. The same goes for a GPU. Also! These GPUs are designed to operate within a certain power draw. Increasing the clocks increases power draw quite substantially. That's another purpose of GPU Boost 3.0. Even at my moderate overclock, I'm pulling ~300 watts peak. And lastly, of course it is 'dumbed down for the masses'. Who do you think buys the vast majority of their products? The masses that just stick one in their machine, and that's it. Sometimes, I think *enthusiasts have unrealistic expectations of what should be provided for a mass-produced product.

Custom costs....did you consider the KingPin model?

I'm just not getting the comparison to CPUs here... yes, Intel and AMD have their own dynamic clocks (at stock settings) but that's where the similarities end. They don't dynamically throttle clocks down based on arbitrary temperature tiers when you start overclocking, unless you count Tj max et al.

Enthusiasts in that space typically have a good general idea of what safe thermal and voltage limits are. As long as you stay within these and the cooler can adequately dissipate the thermal load, it's generally agreed that longevity is not really affected and the overclock is suitable for 24/7 load. By that I don't literally mean 100% CPU cycles 24 hours a day, rather that it's a safe configuration to run as a daily driver without damaging or adversely affecting the components. Though I suspect electricians and engineers may find differences here. To me, it doesn't matter whether I have to run X voltage or Y voltage to get a stable CPU overclock if the temps are pretty much the same under a given application load. That's why I find the temperature-based implementation of GPU Boost weird.

At any rate, I think one of the earlier posters hit the nail on the head: you pretty much get throttled core clocks from 57°C on, probably even earlier. None of my settings aside from memory really stick unless the temp is low.

The temps are not arbitrary, they are intentional. I wasn't arguing the method was identical; I was saying they are conceptually the same: automatic clock control. Changing that requires user intervention. I'm pretty well aware of how all this works; I am actually an EE, retired. Look, I get that you don't like boost, and if everyone WAS an enthusiast, that would be great. However, we are a tiny vocal minority, so the GPU is designed for the vast majority to plug and play and work. Enthusiasts have always been required to figure out ways around it. Whether it was FSB clocking, BCLK, shorting jumpers, whatever. The KingPin is the enthusiast product. On my GPU, below 40°C I am at 2050 MHz; above 40°C I stay dead solid at 2037 MHz at 1.0 V. You can do something similar if you just take the time to figure out where your curve is on your GPU. Later.

post edited by HeavyHemi - 2018/04/16 23:35:33

|

Valtrius Malleus

iCX Member

- Total Posts : 290

- Reward points : 0

- Joined: 2017/03/16 19:46:33

- Status: offline

- Ribbons : 2

Re: How exactly is GPU turbo 3.0 supposed to work?

2018/04/17 04:33:58

ty_ger07

Valtrius Malleus

ty_ger07

GPU Boost 3.0 is not garbage. Boost is great. As said, it's self-overclocking. Even better, it's adjustable overclocking which changes as the card's overclocking limits change.

If all you had was a slider -- like back in the good old days -- you could overclock it to 1950 MHz. But then let's say that you discover that your overclock works nicely until the card reaches 70°C, and then it crashes. So, you lower your overclock to 1925 MHz. Now it works without issue. See the problem? Your total overclock is now actually lower than it could have been with GPU Boost 3.0. With GPU Boost 3.0, it overclocks to 1950 MHz when it can, so you get extra FPS when you can, but lowers to 1925 MHz when it has to in order to keep the card from crashing. Better. Certainly.

The issue is that Boost 3.0 overcompensates so you're not getting a better overclock. It's just something else that's been dumbed down to appeal to the masses.

Not in my experience. The initial boost may be a little low, sure. The initial power safety margin may leave some wanting a little more aggressiveness, sure. But once you increase the power % to where you want it and increase the boost % to the max stable, the way the boost drops as temperature increases seems just about right to me.

Try it for yourself. Boost to +100 (or whatever your max stable is) and see that the core frequency is 2000 MHz at 50°C (or whatever it actually is), see that the core frequency drops to 1970 MHz at 70°C (or whatever it actually is), and then, without allowing core temperature to drop, boost to +130 (or whatever) so that core frequency is back to 2000 MHz (or whatever) at 70°C (or whatever). Crash. See? These are just example numbers, but my point should be clear, and you can easily substitute the max stable values of your video card to prove the point.

That's because it's not just the clocks that are throttling. It's the voltages too. You might be stable at +100 at 1.06 V, but the temperature throttles it back down to 1.05 V or 1.04 V, and although it's the same offset (+100), it's not the same frequency. Adjusting the offset to +125 (or something) to maintain the same frequency as before but at a lower voltage is what causes the crash, not the temperature.
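To make the offset-versus-voltage point concrete, here is a small Python sketch. The voltage/frequency points are hypothetical (picked so the arithmetic lands on the +100 and +125 figures above), and the helper names are invented for illustration; a real card's curve has many more points and will differ.

```python
# Hypothetical stock voltage/frequency points (millivolts -> MHz).
# These are illustrative values, not real 1080 Ti data.
STOCK_CURVE_MHZ = {1040: 1875, 1050: 1887, 1060: 1900}


def clock_at(voltage_mv, offset_mhz):
    """Clock at a given voltage point when the whole curve is shifted by an offset."""
    return STOCK_CURVE_MHZ[voltage_mv] + offset_mhz


def offset_needed(target_mhz, voltage_mv):
    """Offset required to hit a target clock at a given voltage point."""
    return target_mhz - STOCK_CURVE_MHZ[voltage_mv]


if __name__ == "__main__":
    # Same +100 offset, but the clock falls as the voltage point steps down.
    for mv in (1060, 1050, 1040):
        print(f"{mv / 1000:.2f} V with +100: {clock_at(mv, 100)} MHz")
    # Holding the original clock at the lowest voltage needs a bigger offset.
    print("Offset to hold 2000 MHz at 1.04 V:", offset_needed(2000, 1040))
```

In this sketch the larger offset asks for the 1.06 V clock while only supplying 1.04 V, which is the combination that was never shown to be stable.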

|